The ChatGPT moment for Generative Audio

Let's talk about Audiomancy and how the way we make music is going to change

We are watching the birth of a new era of tools for generative audio creation.

Oh users of Ableton, oh creators in Logic and Fruityloops and even you on-trend Bitwig buddies,

Your world is going to be changing and we are going to be addressing that change directly.

I'm talking about music that is created by prompting a large generative model to make music. Here’s one dance-floor-oriented example:

“Sparse dub techno with occasional stabbing chords and lots of reverb, unique rippling synths, around 124bpm”

What matters is the trend line. You might not care for these specific tracks; that's of course legitimate. For starters, the current generation of technology tends to make tracks that are a bit loopy and repetitive, and the sound quality still has a number of audible weak spots.

What matters is not where we are, it’s where we are going: it has quickly become quite felt-sense-true to me that in a year or three, generative audio models are going to be producing content that is indistinguishable from the work of world-class producers. This realization is wild and intense, so I figured I should at least start writing about it, as it seems the most responsible course of action.

What is happening here, and what does it mean for people in various capacities of the modern music and nightlife process? This is the first in a series of posts.

I, and many others in this for-now small community, would argue that some invisible line was crossed with the Suno v3 alpha model. I would further argue that v3 is the ChatGPT moment for generative audio. I want to disclose that I’m entirely unaffiliated with Suno (or any generative audio company); this is just me, as an electronic music producer and technologist, calling things as I personally see them.

Automation, New tools, Job loss

A few positive words for the readers who consider themselves music producers: the ability to make a song in Ableton is not going to go anywhere. You will always be free to sit down and make a track and these generative models won't ever block you from doing that, and will probably help you as much as you would like to be helped. This is going to change the world that you release your tracks into, but not you or your creative expressions.

And this is going to create incredible tools for creative expression. To whatever degree an artist wants to incorporate them, it seems inevitable that we will see many new types of tools - mastering, mixdowns, vocals, stems, basslines, drumlines… eventually, any part of the process that you don’t want to do.

It turns out that the artistic freedom to explore the vast range of possible sounds is also a good fit for modern machine learning techniques. More in this vein of discussion will be explored in a separate post.

And it’s worth explicitly stating that I'm not particularly clairvoyant; this prediction has some assumptions in it. It’s possible that World War III will suddenly halt all generative model research, for example, and my timeline will be totally wrong. However, the trend line is unambiguously strong. WaveNet, one of the first generative audio models, was released in 2016, and I see no sign that the rate of progress is slowing. If anything, the truly historic amounts of money flooding into the AI space are going to accelerate it.

“Ultra psychedelic far out ambient folk unique”

Historical Precedent

It’s important to recognize that this is how technological progress and music have interacted for the whole of human history. When the organ was invented, it allowed a single performer to do the work of large choirs. A single organist was able to make the sounds that previously required 20+ people.

Another example: at one point the whole band had to work together to get a track in a single take, a large creative endeavor that required complex teamwork alongside creativity. That era ended with the arrival of multitrack recording, which allowed audio engineers to record many takes of each performer and stitch them together later in the process.

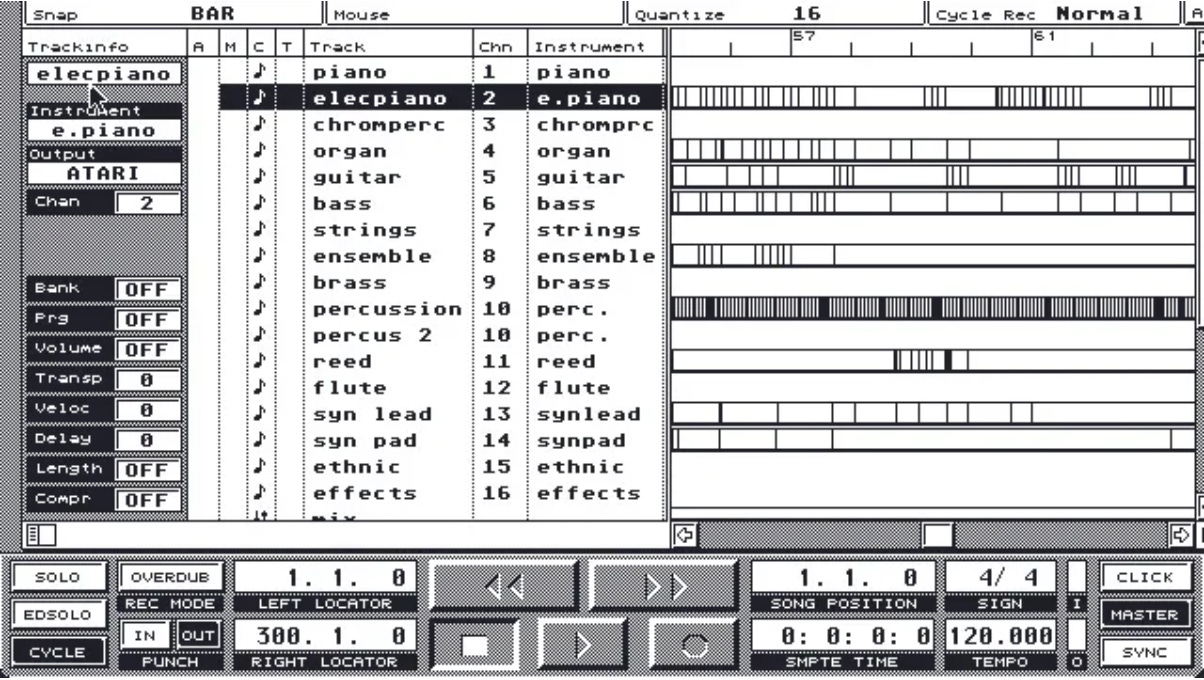

Cubase on an Apple Power Mac circa 1993

We've had 30ish years of a single human being able to craft a full track in a DAW on a computer, and I believe that history books in the future will be listing 2024 as the year in which this era began to change, as technology marches inevitably forward.

Another way to look at this is that we are moving one level up the abstraction chain. When we had 50-piece orchestras, the physics of actually making the French horn produce the sound was the responsibility of the artist. Then we moved to guitars and pianos, which made a vast range of sounds available to the performer. Then we moved to digital tools and modular rigs that let a single artist make an effectively infinite array of sounds, and arrange them manually.

And now - now it seems that the generative audio era is beginning to open, and it will allow artists to curate a specific style and feel by sorting through very large amounts of generated audio.

Note the buildup at 0:20-28, and the new elements entering at 0:41 and 1:19

Audiomancy

Around here (at the Mars College Department of Future Music), we’ve started calling artists who work primarily with generative audio tools ‘audiomancers.’ For example, ‘Atin is an audiomancer and DJ from San Francisco.’ I defer to ChatGPT’s definition of this colloquial expression:

The suffix "-mancer" comes from the Greek "manteia," meaning "divination" or "prophecy," and is used in English to denote a form of divination or manipulation related to the root word it follows. … It traces back to ancient practices where individuals believed they could manipulate certain elements or forces through rituals, symbols, or interactions with the supernatural.

This feels right. Audiomancers interact with non-human audio models and attempt to manipulate them into producing the exact and specific type of sound they seek.

What the audiomancer does is very different from what a music producer does, and I think it’s fair to keep the existing term ‘music producer’ more narrowly defined for those working and creating digitally-hand-crafted material in non-generative ways. Of course, the line between the two will be blurry, but that is how art is, and I think we will need new terms to help with the coming changes in our world.

What lies ahead

There are some secret promptin’ techniques on this one that are saved for a future post

What lies ahead? No one knows. I have some guesses. Some are good guesses, by my own estimation. You'll need to subscribe to get future posts.

Comments and other reader feedback are welcome too. Let's connect!

One love,

-Atin

Editor's Note: this text is 100% human written, without any generative agents involved at any point, besides spellcheck